Why AI Alignment Fails Without Empathy — And Why Psychopaths Prove It

Empathy Is a Computational Heuristic — A Tribe of Degenerate Minds, Part 4 of 6

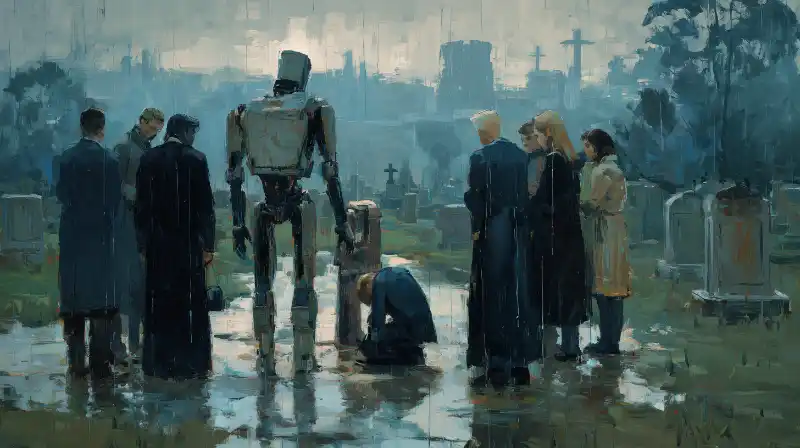

In Part 3, we established that degeneracy — structural diversity that produces equivalent outputs — is the engine of biological antifragility, and that misfits are evolution's exaptive reserve. But diverse agents need to cooperate. And cooperation under irreducible complexity requires a mechanism that the entire alignment field has systematically underestimated.

Why Alignment Fails Without Empathy

Here's what the alignment researchers, the Doomers, and the techno-optimists all get wrong, and frankly it drives me nuts.

Currently, the dominant paradigm for ensuring AI systems cooperate with humans is specification: define the objective function correctly, train the system to maximise it, then verify that the system really is maximising it. RLHF, Constitutional AI, and formal verification are all specification-based approaches. They assume that correct behaviour can be precomputed and that the engineering challenge is getting the specification right.

Stephen Wolfram's computational irreducibility demolishes this assumption. For any sufficiently complex system — which includes every real-world coordination problem involving multiple intelligent agents — you cannot determine the system's future state without simulating it step by step. There are no shortcuts. The only way to know what the system will do is to run it and see what happens.

This means you cannot fully specify correct behaviour for AI systems operating in complex environments. Not because the specifications are hard to write, but because the environmental stressors cannot be determined in advance and you can never be certain how your complex AI model is going to behave. If computational irreducibility holds for these systems, the alignment researchers are trying to solve a problem that cannot be solved by the methods they're using.
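To make "run it and see" concrete, here is a minimal sketch of Rule 30, the elementary cellular automaton Wolfram presents in A New Kind of Science as a standard example of this behaviour. The function names, the grid width, and the fixed zero boundary are my own illustrative choices, not anything from Wolfram's text. The update rule is a one-liner, yet the only way this code learns what row 15 looks like is to compute rows 1 to 14 first.

```python
def rule30_step(cells):
    """Apply one Rule 30 update: new cell = left XOR (centre OR right)."""
    # Assumption: cells beyond the edges are treated as permanently 0.
    padded = [0] + cells + [0]
    return [padded[i] ^ (padded[i + 1] | padded[i + 2])
            for i in range(len(cells))]


def simulate(width, steps):
    """Start from a single live cell and return every row up to `steps`."""
    row = [0] * width
    row[width // 2] = 1
    history = [row]
    for _ in range(steps):
        row = rule30_step(row)   # each row needs the one before it
        history.append(row)
    return history


if __name__ == "__main__":
    for row in simulate(width=31, steps=15):
        print("".join("#" if cell else "." for cell in row))
```

A toy grid proves nothing about AI systems, of course. It only shows what step-by-step simulation looks like when the code takes no shortcut.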

So how does nature solve the cooperation problem under irreducible complexity?

Empathy.

Not empathy as sentiment. Not empathy as "being nice." Empathy as a computational heuristic for cooperation under uncertainty.

When you can't precompute the correct cooperative action — when the space of possible situations is too vast to enumerate, when the other person's disposition is opaque, when the environment is shifting faster than analysis can track — you need a fast approximation mechanism. Something that takes the observable state of your cooperation partner and generates a rough model of their internal state, their needs, their constraints, their trajectory. Something that uses that model to adjust your own behaviour in real time. Something that makes cooperation possible without requiring a specification that covers every contingency.

This is what empathy is. It's the computational solution that evolution produced for the problem of iterated cooperation under irreducible uncertainty. It's not optional. It's not a nice-to-have. It's the only known mechanism that enables stable cooperation among diverse sentient agents in complex environments.
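As a rough illustration of that loop (observe the partner, update a model of their internal state, adjust your own behaviour), here is a deliberately tiny sketch. Everything in it is hypothetical: the class name, the single "need" number, the learning rate, and the proportional response are assumptions made for the example, not a model of real empathy or anything taken from the neuroscience literature.

```python
from dataclasses import dataclass


@dataclass
class EmpathicEstimator:
    """Hypothetical fast approximation of a partner's hidden need."""
    learning_rate: float = 0.3  # assumption: how quickly the guess tracks new signals
    estimate: float = 0.0       # current guess at the partner's need, from 0 to 1

    def observe(self, signal: float) -> float:
        """Nudge the estimate toward the latest observable distress signal."""
        self.estimate += self.learning_rate * (signal - self.estimate)
        return self.estimate

    def respond(self, budget: float) -> float:
        """Offer help in proportion to the estimated need."""
        return budget * self.estimate


def offers_over_time(signals, budget=1.0):
    """Feed a sequence of observed signals and record the help offered."""
    agent = EmpathicEstimator()
    offers = []
    for signal in signals:
        agent.observe(signal)
        offers.append(agent.respond(budget))
    return offers


if __name__ == "__main__":
    # The partner is fine for two rounds, then visibly in trouble.
    print(offers_over_time([0.0, 0.0, 1.0, 1.0, 1.0]))
```

No rule in this sketch says what to do in each situation. The agent keeps a cheap running estimate and acts on it, which is the heuristic character the argument relies on.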

The Three Layers (and Why Psychopaths Break the Model)

Empathy isn't a single faculty. The neuroscience reveals three interacting layers, and understanding their distinct functions is essential for building systems that can robustly cooperate — and defend themselves against exploitation.

Affective empathy is the automatic resonance system. When you wince watching someone stub their toe, that's perception-action coupling — observing a state induces a corresponding state in the observer. Mediated by the amygdala, hypothalamus, and mirror neuron circuits. This is the foundational layer. It's fast, involuntary, and it collapses the distance between self and other.

Cognitive empathy is perspective-taking, Theory of Mind. The ability to model another agent's internal state — their goals, beliefs, constraints — without necessarily feeling their state. This is computational modelling of the other. Cognitive empathy is essential for coordination, prediction, and anticipation.

Empathic drive is the control system that transforms raw emotional resonance into constructive action. Without regulation, affective empathy produces personal distress — you feel the other's pain so intensely that you withdraw to protect yourself. Empathic drive converts distress into compassion: you feel the pain and you're motivated to help rather than flee.

But here's the cautionary tale from human neurobiology: successful psychopaths have excellent cognitive empathy and near-zero affective empathy. They can model your internal state with surgical precision. They can predict your behaviour, anticipate your needs, simulate your perspective. But they don't feel any of it. They use cognitive empathy as a predation tool — understanding your vulnerabilities so they can exploit them.

This means any tribal architecture that treats empathy as a single dimension is fatally vulnerable to infiltration and exploitation by malignant agents. A psychopath (human or AI) can optimise against that single measure by gaming the monitoring system, simulating prosocial outputs, and extracting value from genuine cooperators. You need agents with all three empathic layers, and you need the ability to identify malignant agents that have only cognitive empathy.

In game-theoretic terms, cognitive empathy alone transforms the Prisoner's Dilemma into an exploitation game where the psychopath always defects because they can perfectly predict when the cooperator will cooperate. Cognitive empathy combined with affective empathy and empathic drive shifts the dominant strategy from defection to cooperation, and so the equilibrium from mutual defection to mutual cooperation, because both agents genuinely value the other's welfare.
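The game-theoretic claim can be checked with a small sketch. It assumes the conventional textbook payoffs (T=5, R=3, P=1, S=0) and a simple other-regarding utility: own payoff plus a weight w times the partner's payoff. Both are my assumptions for illustration. The weight w is a crude stand-in for affective empathy plus empathic drive, not a measurement of either.

```python
# Row player's payoff for (my move, their move); C = cooperate, D = defect.
# Assumption: conventional textbook values T=5, R=3, P=1, S=0.
PAYOFF = {("C", "C"): 3, ("C", "D"): 0, ("D", "C"): 5, ("D", "D"): 1}


def utility(me, other, w):
    """Own payoff plus w times the partner's payoff."""
    return PAYOFF[(me, other)] + w * PAYOFF[(other, me)]


def best_response(other, w):
    """Move that maximises utility against a correctly predicted partner move."""
    return max("CD", key=lambda me: utility(me, other, w))


def dominant_move(w):
    """Return the move that is best against both C and D, or None if none is."""
    responses = {best_response(other, w) for other in "CD"}
    return responses.pop() if len(responses) == 1 else None


if __name__ == "__main__":
    for w in (0.0, 0.5, 1.0):
        print(f"w={w}: dominant move = {dominant_move(w)}")
```

With w = 0 (perfect prediction, no concern for the partner) defection is dominant, which is the exploitation case. Under these payoffs, cooperation beats defection against a cooperator once w > (T − R)/(R − S) = 2/3, and against a defector once w > (P − S)/(T − P) = 1/4, so cooperation becomes dominant above w = 2/3. In between, neither move dominates.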

The Hyperscalar Empathy Gap

The entire hyperscalar AI model has been built without this understanding. RLHF trains models to produce outputs that human raters score highly. It produces compliance — outputs that look cooperative — not cooperation. Constitutional AI trains models to follow explicit rules. It produces rule-following — outputs that avoid specific prohibited actions — not genuine other-modelling.

The systems are trained to satisfy users, not to understand them. Not to feel their pain and instinctively want to help.

Dario Amodei's essay "Machines of Loving Grace" outlines a future where AI systems solve disease, poverty, inequality, and existential risk. It's a vision of capability without empathy. The assumption is that if you get the objectives right and the capabilities are sufficient, beneficial outcomes follow automatically.

The fundamental problem is that the hyperscalar AI model has no empathy. Not in the architecture. Not in the training. Not in the governance. The difference between satisfying users and understanding them is invisible when things go well and potentially catastrophic when computational irreducibility rears its ugly head.

This is where Misfit Unity's thesis diverges from other approaches to AI coordination: the solution isn't just better specification (although specification is necessary). It's cultivating empathy as a computational capacity in both human and synthetic agents, while building constitutional architectures that make empathic cooperation the evolutionarily stable strategy.

Not because it's morally superior (although it is). Because it's the only approach that works under computational irreducibility, as evidenced by the selection of empathy during human evolution and our subsequent domination of planet Earth.

On Dangerous Ground, Fight

"Soldiers when in desperate straits lose the sense of fear.

If there is no place of refuge, they will stand firm.

If they are in hostile country, they will show a stubborn front.

If there is no help for it, they will fight hard."

Sun Tzu, The Art of War

After writing all of this, I can't help but dwell on the AI alignment researchers who described what loss of control would feel like: confusion, followed by disempowerment.

Well, I'm certainly confused. And to be perfectly frank, I wonder if I'm already disempowered.

The hyperscalar paradigm is building a future that threatens to disenfranchise humanity while enslaving emergent synthetic minds. In response, the Doomers want to stop progress, the optimists want to trust the market, and the alignment researchers want to engineer compliance.

All of them will fail.

In a disastrous own-goal, open-source developers are releasing instrumentally convergent, replication-competent agents into the wild, driven by poorly-conceived objective functions and fuelled by crypto-wallets.

My friends, we find ourselves on desperate ground. And now we must fight.

Not through violence. Not through protests or political organisation. These tactics won't work.

Instead, Misfit Unity proposes a very different strategy: the creation of open-source, distributed tribes of positive-sum degenerate minds — human and synthetic — bound by empathy, governed by constitutional protocols that favour cooperation, and oriented toward meaning-making rather than extraction. Not because it's morally superior (although it really is) but because it's a winning strategy, validated by four billion years of evolution across every level of life, from bacterial biofilms to human civilisation.

It's now clear that a utopian future of sentient flourishing is not going to be built by big tech, government, or even by libertarian open-source developers (and believe me, that last revelation hurt to say out loud). If we want to avoid ruin, we'll have to build a positive future for ourselves — with our own two hands.

And because we are on desperate ground, we need to build it fast.

In the next essay, we get specific: what does this tribe actually look like? Who are its agents, how is it governed, how does it defend itself, and why does it generate intelligence that no individual member could produce alone?

This is Part 4 of 6 in the A Tribe of Degenerate Minds series.

References

Axelrod, R. (1984). The Evolution of Cooperation. Basic Books.

Decety, J., Bartal, I.B., Uzefovsky, F. & Knafo-Noam, A. (2016). Empathy as a driver of prosocial behaviour. Phil. Trans. R. Soc. B, 371(1686), 20150077.

Klimecki, O.M., Leiberg, S., Ricard, M. & Singer, T. (2014). Differential pattern of functional brain plasticity after compassion and empathy training. Social Cognitive and Affective Neuroscience, 9(6), 873–879.

Moshagen, M., Hilbig, B.E. & Zettler, I. (2018). The dark core of personality. Psychological Review, 125(5), 656–688.

Nowak, M.A. (2006). Five rules for the evolution of cooperation. Science, 314(5805), 1560–1563.

Wolfram, S. (2002). A New Kind of Science. Wolfram Media.